TinyML FOMO: Detection of Rice Varieties Using Edge Impulse

by shahizat in Circuits > Cameras


Rice is one of the most important cereal crops worldwide. Many different rice varieties exist because of the diverse climatic and soil conditions in which rice is grown, and this variety has contributed to the rapidly spreading practice of rice falsification. A method that reliably and accurately identifies rice varieties using modern computer vision algorithms is therefore needed. Rice varieties differ in features such as texture, shape, and color, and these features make it possible to classify and identify them.

In this project, a computer vision system was developed using Edge Impulse's FOMO (Faster Objects, More Objects) algorithm to distinguish between two rice varieties: Jasmine and Basmati. Jasmine rice is originally from Thailand, while Basmati comes from India and Pakistan. This project is intended for those who are planning to scale their POC (proof of concept).

This project consists of four phases:

- Data gathering and labeling

- Training the model using the Edge Impulse FOMO algorithm

- Deployment and inference of the rice variety detection system on the NVIDIA Jetson Xavier NX Developer Kit

- Deployment and inference of the rice variety detection system on the OpenMV Cam H7 Plus

Supplies

You will need the following to complete this tutorial:

- A development board officially supported by the Edge Impulse platform. Here I have used the OpenMV Cam H7 Plus and the NVIDIA Jetson Xavier NX Developer Kit.

- A camera for real-time use: a USB webcam or, for live camera demonstrations, a camera like the Raspberry Pi Camera Module. Here I'll be using the Arducam Complete High Quality Camera Bundle.

- OpenMV IDE. Navigate to the official OpenMV website, then download and install the latest OpenMV IDE.

- An Edge Impulse account.

For simplicity, we will assume everything is installed, so take a look at the requirements before starting the tutorial.

Create and Train the Model in Edge Impulse

With Edge Impulse's SaaS-based machine learning platform, developers can rapidly develop and deploy sensor, audio, and computer vision applications. Edge Impulse is a great platform for non-coders to develop machine learning models without knowledge of any programming language.

So, let's get started.

- Go to the Edge Impulse platform, enter your credentials at Login (or create an account), and start a new project.

- Select the Images option.

- Then click the Classify Multiple Objects option.

- Download the Rice Image Dataset by Murat Koklu from Kaggle. The dataset has 5 classes, but we need only 2 of them (Jasmine and Basmati). We should collect enough images for each of our classes.

- Click the Go to the uploader button.

- Upload the images. In my case, I uploaded 600 images in total, with 300 images for each class (Basmati and Jasmine).

- You can also add more items later by uploading and labeling them in Data Acquisition and then retraining the model.

- Labeling images for object detection is pretty straightforward. We need to annotate the images using the Edge Impulse annotation tool.

- After labeling the images for the different classes, the next step is to split them into one group for training and another for testing.

- Once you have your dataset ready, go to the Create Impulse section.

- The captured image first gets resized to 96x96, which helps keep the final model small.

- Rename the learning block to Rice Varieties detection. Click the Save Impulse button.

- Go to the Image section. Select Grayscale as the color depth. Unlike the standard object detection setup, FOMO is used here with grayscale features. Then press Save parameters.

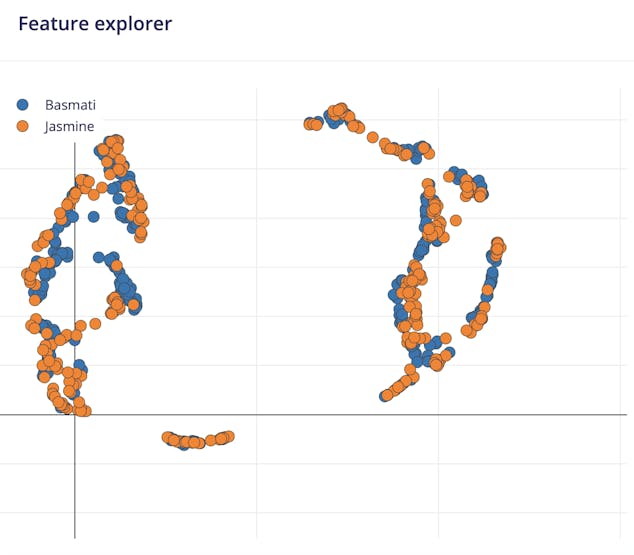

- Finally, click the Generate features button. You should get a response that looks like the one below.

- Navigate to the Rice Varieties detection section.

- Leave the hyperparameters at their default values. In our case, we are going to use MobileNetV2 0.35, as it is a well-balanced pre-trained model. MobileNet is a convolutional neural network designed specifically for mobile and embedded devices. Train the model by pressing the Start training button. It might take up to 15 minutes to train the model. If everything goes correctly, you should see the following output in Edge Impulse.
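The data preparation steps in the list above (keeping only the Basmati and Jasmine classes, taking 300 images of each, and splitting them into training and testing groups) can also be sketched offline before uploading. This is only an illustration, not how Edge Impulse performs the split. The folder names, the `.jpg` extension, and the 80/20 ratio are assumptions, and `pick_and_split` is a hypothetical helper.

```python
import random
import shutil
from pathlib import Path

CLASSES = ("Basmati", "Jasmine")   # the two varieties used in this project
IMAGES_PER_CLASS = 300             # matches the 600-image upload described above
TEST_FRACTION = 0.2                # assumption: an 80/20 train/test split


def pick_and_split(source_dir, output_dir, per_class=IMAGES_PER_CLASS,
                   test_fraction=TEST_FRACTION, seed=42):
    """Copy a random subset of each class into training/ and testing/ folders."""
    rng = random.Random(seed)      # fixed seed so the selection is repeatable
    counts = {}
    for label in CLASSES:
        # Assumption: one sub-folder per class containing .jpg files.
        images = sorted((Path(source_dir) / label).glob("*.jpg"))
        chosen = rng.sample(images, min(per_class, len(images)))
        n_test = int(len(chosen) * test_fraction)
        groups = {"testing": chosen[:n_test], "training": chosen[n_test:]}
        for group, files in groups.items():
            target = Path(output_dir) / group / label
            target.mkdir(parents=True, exist_ok=True)
            for image in files:
                shutil.copy2(image, target / image.name)
            counts[(group, label)] = len(files)
    return counts
```

Calling `pick_and_split("Rice_Image_Dataset", "rice_subset")` (both paths are placeholders) would leave `training/` and `testing/` folders that can be uploaded separately.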

The .tflite file is our model. The final quantized (int8) model file is around 60 KB in size and achieved an accuracy of almost 98%. This quantized model is expected to take around 435 ms per inference, using 243.9 KB of RAM and 77.6 KB of ROM. You can use a program like Netron to view the neural network.

We can now test our trained model. To do so, click the Live classification tab in the left-hand menu and test it.

After the model is trained and tested, the next step is deployment to edge devices. For this, I bought two bags of rice, Jasmine and Basmati, from a local market. Fortunately, I was able to find both varieties.
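To give a feel for what "quantized (int8)" means, here is a minimal sketch of generic affine quantization: each float value is stored as one signed byte plus a shared scale and zero point. It is not the code Edge Impulse or TensorFlow Lite uses, the example weights are made up, and the function names are hypothetical.

```python
def quant_params(values, qmin=-128, qmax=127):
    """Derive a scale and zero point that map the value range onto int8."""
    lo = min(min(values), 0.0)   # include 0 so that 0.0 is represented exactly
    hi = max(max(values), 0.0)
    scale = (hi - lo) / (qmax - qmin) or 1.0   # avoid a zero scale for all-zero input
    zero_point = round(qmin - lo / scale)
    return scale, zero_point


def quantize(values, scale, zero_point, qmin=-128, qmax=127):
    """Map floats to integers and clamp them to the int8 range."""
    return [max(qmin, min(qmax, round(v / scale) + zero_point)) for v in values]


def dequantize(qvalues, scale, zero_point):
    """Recover approximate float values from the stored integers."""
    return [scale * (q - zero_point) for q in qvalues]


weights = [-1.0, -0.25, 0.0, 0.5, 1.5]   # made-up example weights
scale, zp = quant_params(weights)
q = quantize(weights, scale, zp)
restored = dequantize(q, scale, zp)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(q)
print(f"scale={scale:.5f} zero_point={zp} max_error={max_error:.5f}")
print("bytes as float32:", 4 * len(weights), "bytes as int8:", len(q))
```

The rounding error stays within about half a quantization step, while storage drops to one byte per value instead of four, which is the main reason a quantized model fits on small devices.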

Let's get it running on our embedded devices!

Deploying the Trained Model to NVIDIA Jetson Board

Once the training process is complete, we can deploy the trained Edge Impulse object detection model to the NVIDIA Jetson board. If you are going to use a CSI camera for object detection, connect it to the Jetson board before powering it up.

To use Edge Impulse on NVIDIA Jetson boards, you first have to install the Edge Impulse tools and their dependencies.

wget -q -O - https://cdn.edgeimpulse.com/firmware/linux/jetson.sh | bash

Now, use the command below to run Edge Impulse:

edge-impulse-linux

You will be asked to log in to your Edge Impulse account. You’ll then be asked to choose a project, and finally to select a microphone and camera to connect to the project.

Edge Impulse Linux client v1.3.3

WARN: You're running an outdated version of the Edge Impulse CLI tools

Upgrade via `npm update -g edge-impulse-linux`

Stored token seems invalid, clearing cache...

? What is your user name or e-mail address (edgeimpulse.com)? shahizat005@gmail.com

? What is your password? [hidden]

? To which project do you want to connect this device? Shakhizat Nurgaliyev / Identification and Classification of Rice Varieties

? Select a microphone (or run this command with --disable-microphone to skip selection) tegra-hda-xnx - tegra-hda-xnx

[SER] Using microphone hw:0,0

[SER] Using camera CSI camera starting...

[SER] Connected to camera

[WS ] Connecting to wss://remote-mgmt.edgeimpulse.com

[WS ] Connected to wss://remote-mgmt.edgeimpulse.com

? What name do you want to give this device? jetson

[WS ] Device "jetson" is now connected to project "Identification and Classification of Rice Varieties"

[WS ] Go to https://studio.edgeimpulse.com/studio/116760/acquisition/training to build your machine learning model!

You have now successfully installed Edge Impulse on your NVIDIA Jetson board.
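If you want a quick sanity check that the two command-line tools used in this section are available, a small sketch like the one below can help. It only looks the names up on `PATH` and says nothing about versions or login state. `find_tools` is a hypothetical helper name.

```python
import shutil

# Command names as they appear in this tutorial.
TOOLS = ("edge-impulse-linux", "edge-impulse-linux-runner")


def find_tools(names=TOOLS):
    """Return a mapping of tool name to its resolved path (None if missing)."""
    return {name: shutil.which(name) for name in names}


for name, path in find_tools().items():
    print(f"{name}: {path or 'not found on PATH'}")
```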

If everything goes correctly, you should see the following in the Devices section of Edge Impulse:

Now we can deploy the trained Edge Impulse model to the NVIDIA Jetson board. To do so, go to the terminal window and enter the command below:

edge-impulse-linux-runner

This will automatically compile your model, download it to your Jetson, and then start classifying. It may take a couple of minutes. The results will be shown in the terminal window.

edge-impulse-linux-runner

Edge Impulse Linux runner v1.3.3

[RUN] Already have model /home/jetson/.ei-linux-runner/models/116760/v3/model.eim not downloading...

[RUN] Starting the image classifier for Shakhizat Nurgaliyev / Identification and Classification of Rice Varieties (v3)

[RUN] Parameters image size 96x96 px (1 channels) classes [ 'Basmati', 'Jasmine' ]

[RUN] Using camera CSI camera starting...

[RUN] Connected to camera

Want to see a feed of the camera and live classification in your browser? Go to http://YOUR_IP_ADDRESS:4912

boundingBoxes 10ms. []

boundingBoxes 2ms. []

boundingBoxes 5ms. [{"height":8,"label":"Basmati","value":0.9813019633293152,"width":16,"x":56,"y":24}]
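The `boundingBoxes` lines in this output carry the inference time and a JSON list of detections, so they are easy to post-process. The sketch below parses lines in the format shown above. The regular expression is an assumption based only on this sample output, and `parse_runner_line` is a hypothetical helper.

```python
import json
import re

# Matches lines such as: boundingBoxes 5ms. [{...}]
LINE_RE = re.compile(r"^boundingBoxes (\d+)ms\. (\[.*\])$")


def parse_runner_line(line):
    """Return (latency_ms, detections) for a boundingBoxes line, else None."""
    match = LINE_RE.match(line.strip())
    if match is None:
        return None
    return int(match.group(1)), json.loads(match.group(2))


sample = [
    'boundingBoxes 10ms. []',
    'boundingBoxes 5ms. [{"height":8,"label":"Basmati",'
    '"value":0.9813019633293152,"width":16,"x":56,"y":24}]',
]
for line in sample:
    latency, boxes = parse_runner_line(line)
    for box in boxes:
        print(f'{box["label"]} {box["value"]:.2f} at x={box["x"]} y={box["y"]} ({latency} ms)')
```

Lines that do not start with `boundingBoxes`, such as the `[RUN]` status messages, return `None` and can simply be skipped.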

You can also view the video stream in a browser. Open a browser on the host machine and go to http://YOUR_IP_ADDRESS:4912.
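If you are not sure what to put in place of YOUR_IP_ADDRESS, the following sketch, run on the Jetson itself, makes a best-effort guess at the board's LAN address and prints the matching URL. The port comes from the runner output above. The helper names are hypothetical, and the guess may be wrong on machines with several network interfaces.

```python
import socket

RUNNER_PORT = 4912  # port shown in the runner output above


def guess_local_ip():
    """Best-effort guess of this machine's LAN address; None if undetermined."""
    probe = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    try:
        # Connecting a UDP socket sends no packets; it only selects a route.
        # 192.0.2.1 is a reserved documentation address used here as a dummy target.
        probe.connect(("192.0.2.1", 80))
        return probe.getsockname()[0]
    except OSError:
        return None
    finally:
        probe.close()


def feed_url(ip, port=RUNNER_PORT):
    """Build the URL of the live camera feed for a given address."""
    return f"http://{ip}:{port}"


ip = guess_local_ip()
print(feed_url(ip) if ip else "Could not determine the address; try `hostname -I`.")
```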

You can see how it works below:

Instead of detecting bounding boxes like YOLOv5 or MobileNet SSD, FOMO predicts the object's center. We were able to get up to 22.9 frames per second, which is fast enough for real-time object detection.
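To make the "object's center" idea concrete, the sketch below takes the detection from the runner output shown earlier and computes its center in input-image pixels, along with the coarse grid cell it falls in. The cell size of 8 pixels is an assumption, chosen because the coordinates and sizes in that log are all multiples of 8. The helper names are hypothetical.

```python
CELL_SIZE = 8  # assumption: one output cell per 8x8 block of input pixels


def box_center(box):
    """Center of a reported box in input-image pixel coordinates."""
    return box["x"] + box["width"] / 2, box["y"] + box["height"] / 2


def grid_cell(box, cell_size=CELL_SIZE):
    """(column, row) of the grid cell containing the box's top-left corner."""
    return box["x"] // cell_size, box["y"] // cell_size


# Detection copied from the runner output earlier in this section.
detection = {"height": 8, "label": "Basmati", "value": 0.9813019633293152,
             "width": 16, "x": 56, "y": 24}

print(box_center(detection))   # (64.0, 28.0)
print(grid_cell(detection))    # (7, 3)
print(96 // CELL_SIZE)         # 12 cells per side for a 96x96 input
```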

Below you can see an example of the rice variety detection demo on the Jetson board. It was divided into 4 parts:

The Jasmine rice variety was successfully identified.

The Basmati rice variety was successfully identified alongside the previously identified Jasmine variety.

The task is made a bit more difficult.

We've taken our training data, trained a model in the cloud using the Edge Impulse platform, and we're now running that model locally on our NVIDIA Jetson board. The model was therefore successfully deployed to a single-board computer.

Deploying the Trained Model to OpenMV H7 Plus Board

The OpenMV Cam H7 Plus is a compact, high-resolution, low-power computer vision development board. It is programmed in MicroPython using the OpenMV IDE.

Go to the Deployment tab in Edge Impulse. Select the firmware type for your edge device. In my case, it is OpenMV Firmware.

At the bottom of the section, press the Build button. A zip file will be automatically downloaded to your computer. Unzip it.

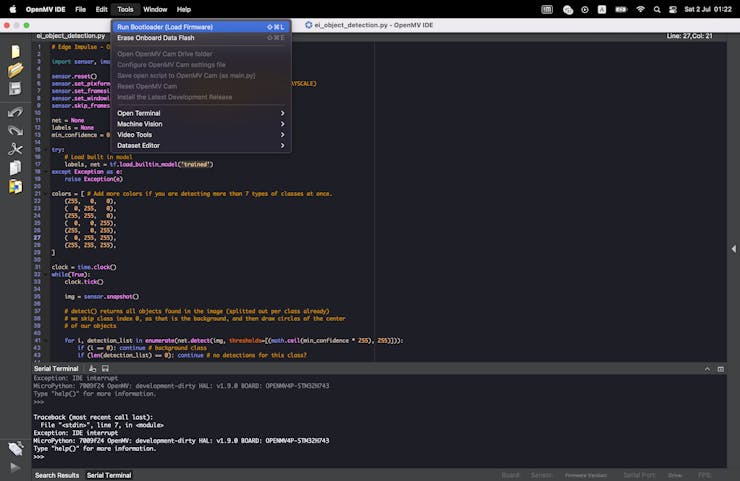

Open the Python script ei_object_detection.py in the OpenMV IDE.

Go to Tools in the menu bar, click Run Bootloader (Load Firmware), and select the .bin file.

It may take several minutes to load the firmware onto your development board. Once this is done, click the Play button in the bottom-left corner. Then open the serial monitor.

So, here is my demo setup.


The example below shows how to predict the rice varieties in a live video stream on the OpenMV H7 Plus camera board using the OpenMV IDE.

As you can see, the disadvantage of the OpenMV H7 Plus is its lower frame rate compared to the NVIDIA Jetson board. In terms of accuracy, however, both the Jetson board and the OpenMV H7 Plus achieved great results.

By default, all projects on the Edge Impulse web platform are private and accessible only to their owner; however, you can make a project public. You can find this project at this link.
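Frame rate and per-frame latency are two views of the same number, so the figures quoted in this tutorial can be put on a common scale. The sketch below converts the 435 ms estimate reported after training and the 22.9 frames per second measured on the Jetson. Note that the 435 ms value is an estimate whose target device is not stated here, so it should not be read as a measured OpenMV result.

```python
def fps_from_latency(latency_ms):
    """Frames per second if every frame takes latency_ms milliseconds."""
    return 1000.0 / latency_ms


def latency_from_fps(fps):
    """Milliseconds per frame at a given frame rate."""
    return 1000.0 / fps


print(f"{fps_from_latency(435):.1f} fps at 435 ms per inference")
print(f"{latency_from_fps(22.9):.1f} ms per frame at 22.9 fps")
```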

Conclusion

Finally, I have demonstrated a proof-of-concept application of machine learning by building a TinyML project using the Edge Impulse platform and deploying it on a microcontroller and a single-board computer. I used Edge Impulse's FOMO algorithm, a state-of-the-art object detection algorithm that reached almost 98% accuracy in training and ran at up to 22.9 frames per second on the Jetson board.

Feel free to leave a comment below. Thank you for reading!

References

- Introducing Faster Objects More Objects aka FOMO

- Rice Image Dataset by Murat Koklu

- Object Classification using Edge Impulse TinyML on Raspberry Pi

- Classifying rice